Based on the information in this post, you can see that ExternalTaskSensor is a conditional sensor task, whereas the REST API's trigger endpoint creates a new DAG run without any conditional check. Creating a DAG run through the REST API is similar to using TriggerDagRunOperator. The difference is that TriggerDagRunOperator also creates a DAG run, but it can implement a conditional check, for example to wait if a DAG run is already active for the child DAG.
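With a plain REST API trigger, any such check is up to you. Below is a minimal sketch of a "wait until the child DAG is idle" guard you could run before calling the trigger endpoint. It assumes you already have some way to look up the states of the child DAG's existing runs. The function name, the `get_run_states` callable, and treating "queued" and "running" as the active states (Airflow 2 run state names) are all hypothetical choices, not part of Airflow's API.

```python
import time
from typing import Callable, Iterable

# Hypothetical: run states treated as "still active" (Airflow 2 uses these names).
ACTIVE_STATES = {"queued", "running"}


def wait_until_no_active_run(
    get_run_states: Callable[[], Iterable[str]],
    poke_interval: float = 60.0,
    timeout: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until the child DAG has no active run.

    Returns True once it is safe to trigger, or False if `timeout` seconds
    of waiting pass while a run is still active.
    """
    waited = 0.0
    while True:
        # Ask the (hypothetical) lookup for the current states of the child's runs.
        if not any(state in ACTIVE_STATES for state in get_run_states()):
            return True
        if waited >= timeout:
            return False
        sleep(poke_interval)
        waited += poke_interval
```

The `sleep` parameter is injected only so the guard can be exercised without real waiting. In practice you would keep the default `time.sleep` and call the trigger endpoint only after this returns True.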

ExternalTaskSensor does not work across Airflow environments, so the REST API is a good choice if your use case requires cross-environment dependencies.

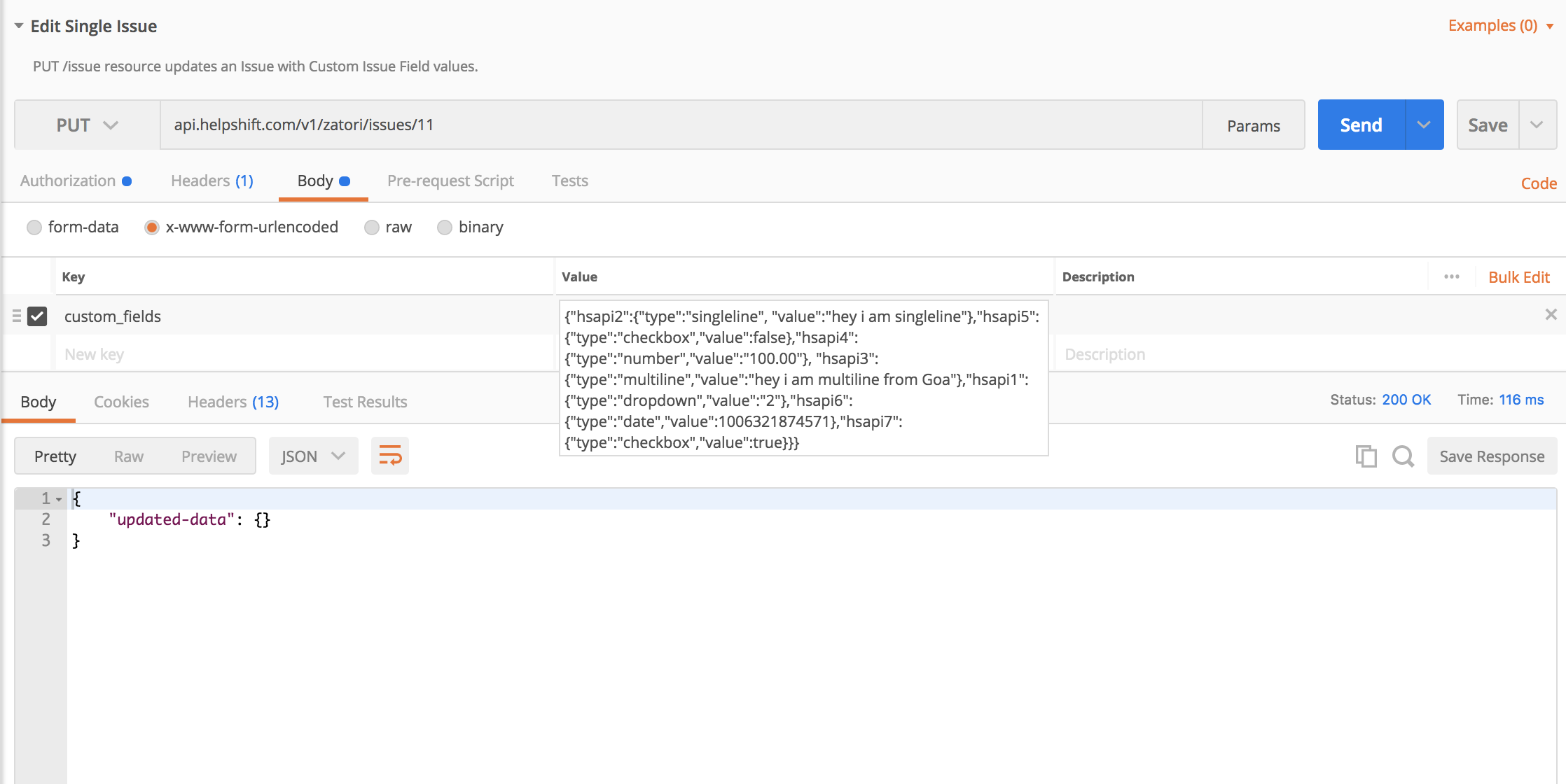

ExternalTaskSensor is used mainly for cross-DAG dependencies in the same Airflow environment, based on task states. One important factor when using this sensor is that the schedule_interval of the parent DAG and the child DAG should be the same. By default, the sensor looks for the external task in the parent DAG run that has the same execution_date as the current child DAG run. If the schedules aren't the same, you can use either execution_delta or execution_date_fn, but not both. When using execution_delta, the value you give (for example, timedelta(hours=1)) is subtracted from the child DAG run's execution_date. The resulting date must equal the execution_date of a parent DAG run for the sensor condition to be satisfied.

For example, suppose you want to trigger DAG B (schedule_interval="0 1 * * *") only on the success of Task 1 of DAG A (schedule_interval="0 0 * * *"). DAG B runs one hour after DAG A, so the sensor in DAG B needs execution_delta=timedelta(hours=1). Then your ExternalTaskSensor should look like:

`check_dag_a_task_1 = ExternalTaskSensor(`

You can also check in the logs which execution_date the sensor is poking for. Another useful parameter of this sensor is check_existence. When set to True, the sensor stops waiting immediately if the external DAG or task doesn't exist, instead of waiting unnecessarily. See the Airflow docs for other parameters and the Astronomer docs on how to use this sensor.

The Airflow REST API trigger is particularly useful for setting up dependencies across different Airflow environments.

From astronomer/docs/blob/airflow_api/v0.13/airflow-api-on-astronomer.md (description: "Using the Airflow API on Astronomer"):

Airflow exposes a handful of endpoints through the webserver as part of its experimental REST API. A list of supported endpoints can be found on the ().

Each call to the Airflow API on Astronomer will need to contain an authentication token. The token generated upon log in at `` (or `app.BASEDOMAIN/token` for enterprise users) can be used, but it is recommended that any workflows hitting the API use a long-lived service account token.
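The execution_delta arithmetic for the DAG A / DAG B example can be checked without a running Airflow instance. This is a minimal sketch in plain Python. The IDs `dag_a` and `task_1` are hypothetical stand-ins for DAG A and Task 1. `sensor_kwargs` lists the keyword arguments one would pass as `ExternalTaskSensor(**sensor_kwargs)` inside DAG B's definition (Airflow itself is not imported here). `parent_execution_date` reproduces the subtraction the sensor performs with execution_delta. `dag_a_date_fn` shows the equivalent execution_date_fn alternative.

```python
from datetime import datetime, timedelta

# Hypothetical IDs for the DAG A / Task 1 example in the text.
PARENT_DAG_ID = "dag_a"
PARENT_TASK_ID = "task_1"

# Keyword arguments one would pass to ExternalTaskSensor inside DAG B.
# execution_delta and execution_date_fn are mutually exclusive, so only one is set.
sensor_kwargs = {
    "task_id": "check_dag_a_task_1",
    "external_dag_id": PARENT_DAG_ID,
    "external_task_id": PARENT_TASK_ID,
    "execution_delta": timedelta(hours=1),
    "check_existence": True,
}


def parent_execution_date(child_execution_date: datetime, execution_delta: timedelta) -> datetime:
    """Execution date the sensor pokes for: the child's date minus execution_delta."""
    return child_execution_date - execution_delta


def dag_a_date_fn(child_execution_date: datetime) -> datetime:
    """Equivalent execution_date_fn: maps DAG B's execution date to DAG A's."""
    return child_execution_date - timedelta(hours=1)


# A DAG B run (0 1 * * *) at 01:00 maps to the DAG A run (0 0 * * *) at midnight.
child_run = datetime(2020, 6, 1, 1, 0)
target = parent_execution_date(child_run, sensor_kwargs["execution_delta"])
print(target)  # 2020-06-01 00:00:00
```

If the subtraction lands on a time at which DAG A has no run, the sensor keeps poking until it times out. That is why the resulting date has to match the parent's schedule exactly.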
Here's what I wrote on our own Confluence page about using the REST API. Apparently new users can only put two links in a post, so the links below are broken.

Airflow exposes what they call an "experimental" REST API, which allows you to make HTTP requests to an Airflow endpoint to do things like trigger a DAG run. With Astronomer, you just need to create a service account whose token is passed in for authentication. Here are the steps:

Create a service account in your Astronomer deployment: click "Service Accounts", then click "New Service Account". Note down the token, because you won't be able to view it again.

In this example, we'll be using the dag_runs endpoint, which lets you trigger a DAG to run. For more information, the docs are available here: https :///api.html

Using an entry in the Astronomer Forums (https :///t/hitting-the-airflow-api-through-astronomer/44), we start out with a generic cURL request (replace AIRFLOW_DOMAIN with your deployment's domain and API_TOKEN with the service account token):

curl -v -X POST https://AIRFLOW_DOMAIN/api/experimental/dags/airflow_test_basics_hello_world/dag_runs -H 'Authorization: API_TOKEN' -H 'Cache-Control: no-cache' -H 'content-type: application/json' -d '{}'

Because this is just a cURL command executed through the command line, this functionality can also be replicated in a scripting language like Python using the Requests library.
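As the text notes, the same call can be made from Python. The sketch below is an illustration under stated assumptions, not the author's script:

- It uses the standard library's urllib.request instead of the Requests library, so it runs without extra installs.
- It only builds the request and does not send it.
- The function name `build_trigger_request` is hypothetical.
- The optional `conf` payload is an assumption about what the experimental endpoint accepts.
- AIRFLOW_DOMAIN and API_TOKEN are placeholders for your deployment's domain and service account token.

```python
import json
import urllib.request
from typing import Optional


def build_trigger_request(
    airflow_domain: str, dag_id: str, token: str, conf: Optional[dict] = None
) -> urllib.request.Request:
    """Build (but do not send) a POST to the experimental dag_runs endpoint."""
    url = f"https://{airflow_domain}/api/experimental/dags/{dag_id}/dag_runs"
    # An empty JSON object mirrors the cURL example's -d '{}'.
    # Assumption: a "conf" object, if given, is passed through to the new DAG run.
    body = {"conf": conf} if conf is not None else {}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": token,
            "Cache-Control": "no-cache",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_trigger_request("AIRFLOW_DOMAIN", "airflow_test_basics_hello_world", "API_TOKEN")
print(req.get_method(), req.full_url)
# POST https://AIRFLOW_DOMAIN/api/experimental/dags/airflow_test_basics_hello_world/dag_runs
```

To actually send it, pass the request to `urllib.request.urlopen(req)`. With the Requests library, the equivalent call is `requests.post(url, headers=headers, json=body)`.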
